An expanded first-author study investigating CBF-fALFF neurovascular coupling disruption across the first year post-injury in moderate-to-severe TBI. Applies five simultaneous analytical frameworks — whole-brain global, hemisphere-level, Yeo-7 functional networks, 68-region DKT atlas, and neighborhood vertex-wise — to characterize disruption patterns and their relationship to injury severity (PTA), neuropsychological outcomes, and lesion volume. Built on my MS thesis, expanded in scope and depth through ongoing Research Assistant work.

One of the first studies to examine neurovascular coupling in moderate-to-severe TBI at vertex-level spatial resolution. Computed CBF-fALFF coupling at 20,484 cortical surface points per subject across 121 imaging sessions (29 TBI × 3 timepoints + 34 controls). Found significant bilateral coupling disruption at 12 months post-injury and significant negative correlation between coupling and post-traumatic amnesia severity (PTA) at 6 months — supporting NVC disruption as a candidate non-invasive biomarker for TBI severity and recovery monitoring.

Patel, V. H. (2025). Vertex-based analysis of cerebral blood flow and fractional amplitude of low-frequency fluctuations (CBF-fALFF) coupling in moderate-to-severe traumatic brain injury during the first year post-injury [Master's thesis, The City University of New York]. CUNY Academic Works. https://academicworks.cuny.edu/gc_etds/6138/

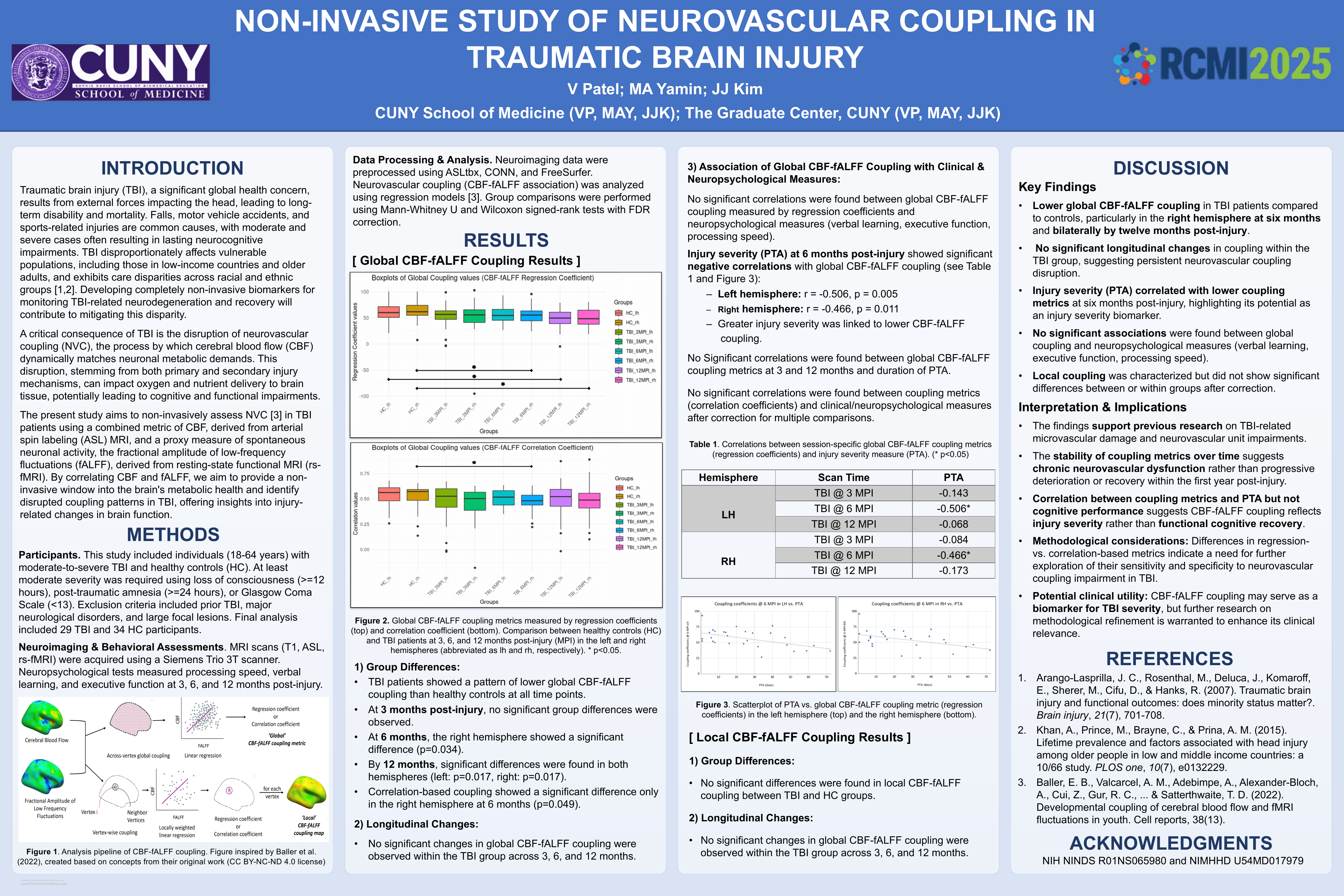

Presented at RCMI 2025. Co-authored with MA Yamin and JJ Kim (CUNY School of Medicine / Graduate Center). Examined CBF-fALFF neurovascular coupling in moderate-to-severe TBI — 29 TBI patients, 34 healthy controls, three timepoints. Found persistent hemisphere-level coupling disruption by 12 months post-injury and significant negative correlation between coupling and injury severity (PTA) at 6 months. Funded by NIH NINDS and NIMHHD.

Patel, V., Yamin, M. A., & Kim, J. J. (2025). Non-invasive study of neurovascular coupling in traumatic brain injury [Poster presentation]. RCMI 2025, New York, NY.